The client secret of the service principal used to invoke the endpoint The client ID of the service principal used to invoke the endpoint This token will be used to invoke the endpoint later. Authorize: It's a Web Activity that uses the service principal created in Authenticating against batch endpoints to obtain an authorization token.You can use the default Azure Machine Learning data store, named workspaceblobstore.Īzureml://datastores/workspaceblobstore/paths/batch/predictions.csv It must be a path to an output file in a Data Store attached to the Machine Learning workspace. Currently batch endpoints support folders ( UriFolder) and File ( UriFile). The type of the input data you are providing. Alternative, if using Data Stores, ensure the credentials are indicated there. Ensure that the manage identity you are using for executing the job has access to the underlying location. The number of seconds to wait before checking the job status for completion. The pipeline requires the following parameters to be configured: Parameter Wait: It's a Wait Activity that controls the polling frequency of the job's status.Check status: It's a Web Activity that queries the status of the job resource that was returned as a response of the Run Batch-Endpoint activity.This activity, in turns, uses the following activities: Wait for job: It's a loop activity that checks the status of the created job and waits for its completion, either as Completed or Failed.It passes the input data URI where the data is located and the expected output file.

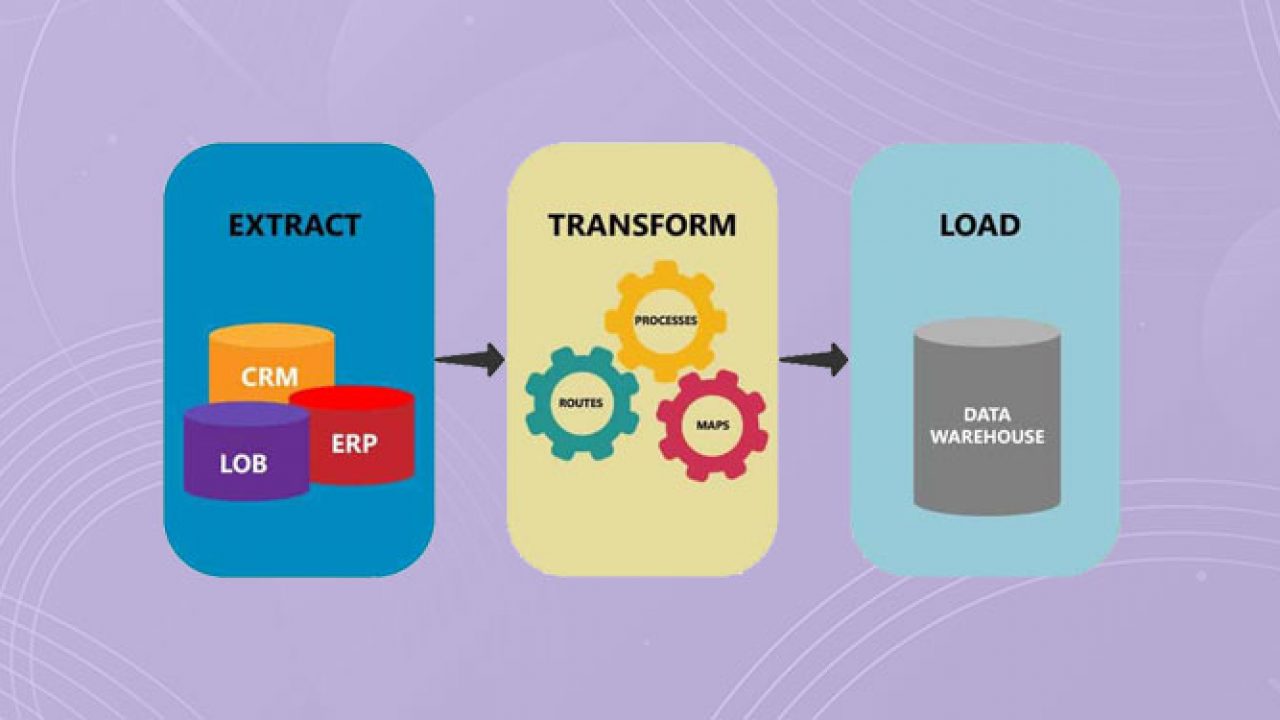

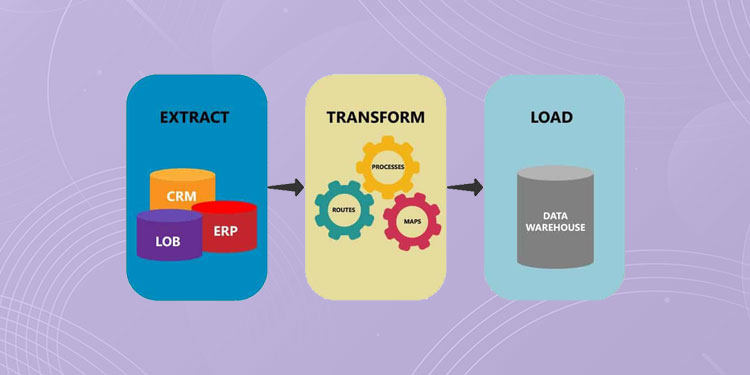

Run Batch-Endpoint: It's a Web Activity that uses the batch endpoint URI to invoke it.It is composed of the following activities: To know more about how to use the REST API of batch endpoints read Create jobs and input data for batch endpoints. The pipeline will communicate with Azure Machine Learning batch endpoints using REST. We are going to create a pipeline in Azure Data Factory that can invoke a given batch endpoint over some data. In this example the service principal will require: Grant access for the service principal you created to your workspace as explained at Grant access. Take note of the client ID and the tenant id in the Overview pane of the application. Take note of the client secret Value that is generated. Permissions to read in any cloud location (storage account) indicated as a data input.Ĭreate a service principal following the steps at Register an application with Microsoft Entra ID and create a service principal.Ĭreate a secret to use for authentication as explained at Option 3: Create a new client secret.Permissions to read/write in data stores.Permission in the workspace to read batch deployments and perform actions over them.Once deployed, grant access for the managed identity of the resource you created to your Azure Machine Learning workspace as explained at Grant access. Once the resource is created, you will need to recreate it if you need to change the identity of it. Notice that changing the resource identity once deployed is not possible in Azure Data Factory. We recommend using a managed identity as it simplifies the use of secrets. You can use a service principal or a managed identity to authenticate against Batch Endpoints. Batch endpoints support Microsoft Entra ID for authorization and hence the request made to the APIs require a proper authentication handling. 
Select Open on the Open Azure Data Factory Studio tile to launch the Data Integration application in a separate tab.Īzure Data Factory can invoke the REST APIs of batch endpoints by using the Web Invoke activity. If you have not created your data factory yet, follow the steps in Quickstart: Create a data factory by using the Azure portal and Azure Data Factory Studio to create one.Īfter creating it, browse to the data factory in the Azure portal: Particularly, we are using the heart condition classifier created in the tutorial Using MLflow models in batch deployments.Īn Azure Data Factory resource created and configured. This example assumes that you have a model correctly deployed as a batch endpoint. In this example, learn how to use batch endpoints in Azure Data Factory activities by relying on the Web Invoke activity and the REST API. Batch endpoints are an excellent candidate to become a step in such processing workflow. Azure Data Factory is a managed cloud service that's built for these complex hybrid extract-transform-load (ETL), extract-load-transform (ELT), and data integration projects.Īzure Data Factory allows the creation of pipelines that can orchestrate multiple data transformations and manage them as a single unit. APPLIES TO: Azure CLI ml extension v2 (current) Python SDK azure-ai-ml v2 (current)īig data requires a service that can orchestrate and operationalize processes to refine these enormous stores of raw data into actionable business insights.
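To make the roles of the Run Batch-Endpoint and Wait for job activities more concrete, here is a minimal Python sketch of the same logic outside of Azure Data Factory. It is not the pipeline definition itself. The request body layout (`properties`, `InputData`, `JobInputType`, `OutputData`, `JobOutputType`) is an assumption based on the batch endpoints REST payload, so check it against Create jobs and input data for batch endpoints before relying on it. The function names, the `input_data` and `output_data` keys, and the `get_status` callable (a stand-in for the Check status Web Activity) are hypothetical and exist only for illustration.

```python
import time
from typing import Callable, Dict

# Terminal job states named in this article.
TERMINAL_STATES = ("Completed", "Failed")


def build_job_request(input_uri: str, input_type: str, output_uri: str,
                      input_name: str = "input_data",
                      output_name: str = "output_data") -> Dict:
    """Build the JSON body that a Run Batch-Endpoint style call would send.

    The field names are an assumption about the REST payload; the input and
    output key names are hypothetical placeholders.
    """
    if input_type not in ("UriFolder", "UriFile"):
        raise ValueError("input_type must be 'UriFolder' or 'UriFile'")
    return {
        "properties": {
            "InputData": {
                input_name: {"JobInputType": input_type, "Uri": input_uri}
            },
            "OutputData": {
                # The output must be a file in a data store of the workspace.
                output_name: {"JobOutputType": "UriFile", "Uri": output_uri}
            },
        }
    }


def wait_for_job(get_status: Callable[[], str], poll_interval: float,
                 sleep: Callable[[float], None] = time.sleep,
                 max_polls: int = 1000) -> str:
    """Poll a job until it is Completed or Failed, like the Wait for job loop.

    get_status plays the role of the Check status activity and sleep plays
    the role of the Wait activity. max_polls is a safety limit added here.
    """
    for _ in range(max_polls):
        status = get_status()
        if status in TERMINAL_STATES:
            return status
        sleep(poll_interval)
    raise TimeoutError("job did not reach a terminal state")


if __name__ == "__main__":
    body = build_job_request(
        # Hypothetical input folder; replace with your own data location.
        input_uri="azureml://datastores/workspaceblobstore/paths/batch/input",
        input_type="UriFolder",
        output_uri="azureml://datastores/workspaceblobstore/paths/batch/predictions.csv",
    )
    print(body)

    # Simulated status sequence so the sketch runs without calling Azure.
    fake_statuses = iter(["Running", "Running", "Completed"])
    print(wait_for_job(lambda: next(fake_statuses), poll_interval=0,
                       sleep=lambda seconds: None))
```

Passing `get_status` and `sleep` as callables keeps the polling logic separate from the HTTP call, so the loop can be exercised without a live endpoint. In a real client, `get_status` would issue an authenticated GET against the job resource returned by the invocation.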
